Entertainment industry voices urge legal protections against AI-generated likenesses

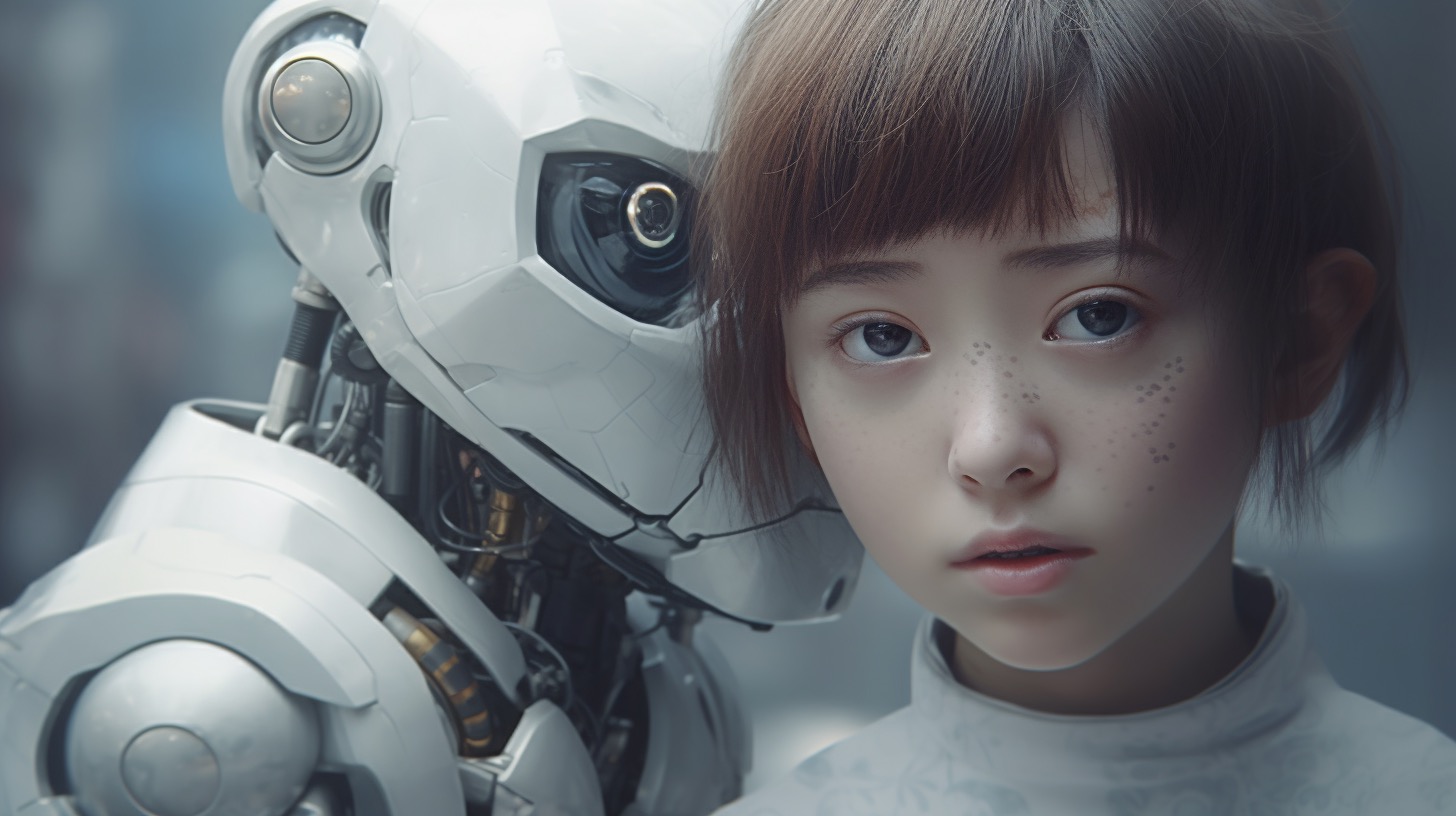

Following the unnervingly realistic re-creation of actress Scarlett Johansson’s voice through AI technology, there has been a surge in the demand for legal measures to govern the use of such deepfakes. In the wake of these concerns, prominent members of the AI sector are recommending that Congress enact specific laws to safeguard individuals from unauthorized digital impersonations.

A notable voice in this initiative is BSA Software Alliance, whose members include influential companies such as Microsoft, a substantial investor in OpenAI. The group recently issued an official position calling for a legally enshrined right that would prevent the exploitation of digital doubles without consent, emphasizing the importance of safeguarding an artist’s name, voice, and likeness from the distribution of indistinguishable counterfeits.

As various proposals for AI regulation move through Congress, the urgency for legislative action is palpable. The Screen Actors Guild‐American Federation of Television and Radio Artists (SAG-AFTRA) endorses the “No Fakes Act,” pushing for a prohibition on creating or sharing such digital forgeries without permission. Delaware Senator Chris Coons, a co-sponsor, is preparing to introduce a refined draft of the bill shortly.

BSA’s suggestions, however, lean toward a narrower legislative path focused on facilitating the removal of such content. The group also champions shielding service providers from liability for their users’ actions, while proposing a ban on tools primarily designed to generate unlawful deepfakes, provided that the ban does not interfere with AI’s beneficial applications.

At the state level, the legislative landscape has begun to evolve: Tennessee has enacted the “ELVIS Act,” which curbs unauthorized voice cloning, and other states are considering similar measures. Nevertheless, groups such as the Motion Picture Association are cautioning about the potential implications for free speech rights.

With the specter of problematic digital replicas looming, the unified message from industry stakeholders to legislators is clear: Establishing a consistent federal guideline is essential for both the protection of individual identities and the flourishing of creative innovations.

Important Questions and Answers:

1. What is a “deepfake”?

A deepfake is an artificial intelligence-generated video, image, or audio recording that seems realistic but is manipulated to show someone saying or doing something they did not actually say or do.

2. Why is legislation for AI and deepfakes necessary?

Legislation is necessary to protect individuals from unauthorized use of their likeness and to prevent the spread of misinformation, while balancing free speech against privacy and personal rights.

3. What challenges are associated with deepfake legislation?

Key challenges include defining legal standards for consent, addressing the speed of technological development, ensuring that laws do not stifle innovation or infringe on free speech, and establishing effective enforcement mechanisms for these laws.

Key Challenges and Controversies:

– Defining Consent: Creating laws that detail how and when consent must be obtained for using a person’s likeness can be complex. There are gray areas such as satire, parody, and public interest exceptions that need to be clearly defined.

– Enforcement: Identifying and prosecuting offenders can be difficult, especially because the internet has no borders and deepfakes can be created and disseminated from anywhere in the world.

– Balancing Innovation and Regulation: There is a concern that too much regulation could hinder the development of AI technologies that have beneficial uses.

– Free Speech Implications: Legislation must carefully consider First Amendment rights to ensure that legal protections against deepfakes do not become tools for censorship.

Advantages and Disadvantages:

Advantages:

– Protects individuals’ rights to their image and voice.

– Aims to prevent the spread of misinformation.

– Encourages responsible innovation in AI technology.

Disadvantages:

– Could impact creative expression and free speech.

– Might be difficult to enforce internationally.

– Risks becoming outdated quickly due to the rapid pace of technological change.
