Ambarella, Inc., an edge AI semiconductor company, is demonstrating multi-modal large language models (LLMs) running on its new N1 SoC series. The N1 SoCs provide significantly better power-per-inference efficiency than leading GPU solutions, enabling the deployment of generative AI technology in edge endpoint devices and on-premises hardware.… Read the rest

SecFormer: Balancing Performance and Efficiency in Privacy-Preserving Inference for Transformer Models

A new framework called SecFormer has been introduced to address the challenge of Privacy-Preserving Inference (PPI) for large language models based on the Transformer architecture. The increasing reliance on cloud-hosted large language models has raised privacy concerns, especially when sensitive data is involved.… Read the rest

The Future of Education: Embracing Technology for Enhanced Learning

In today’s rapidly evolving world, education must adapt and embrace technological advancements to prepare students for the future. Ireland is at the forefront of this transformation, with the government announcing the expansion of a scheme that provides free technological devices to students.… Read the rest

New AI Testing Platform Helps Ensure Reliable Results from Language Models

With the rapid advancement of generative AI (genAI) platforms, there is growing concern about the reliability of the large language models (LLMs) that power these systems. As LLMs become more adept at mimicking natural language, it becomes increasingly difficult to distinguish real information from fake.… Read the rest

New Era of Artificial Intelligence: Understanding the Deep Structure of Perception

In the year 2023, artificial intelligence (AI) is set to take a massive leap forward. However, the implications of this advancement remain uncertain. While some predict a utopian future, others fear apocalyptic consequences. The rapid progress in large language models like ChatGPT has opened up possibilities for AI to revolutionize various fields such as education and healthcare.… Read the rest

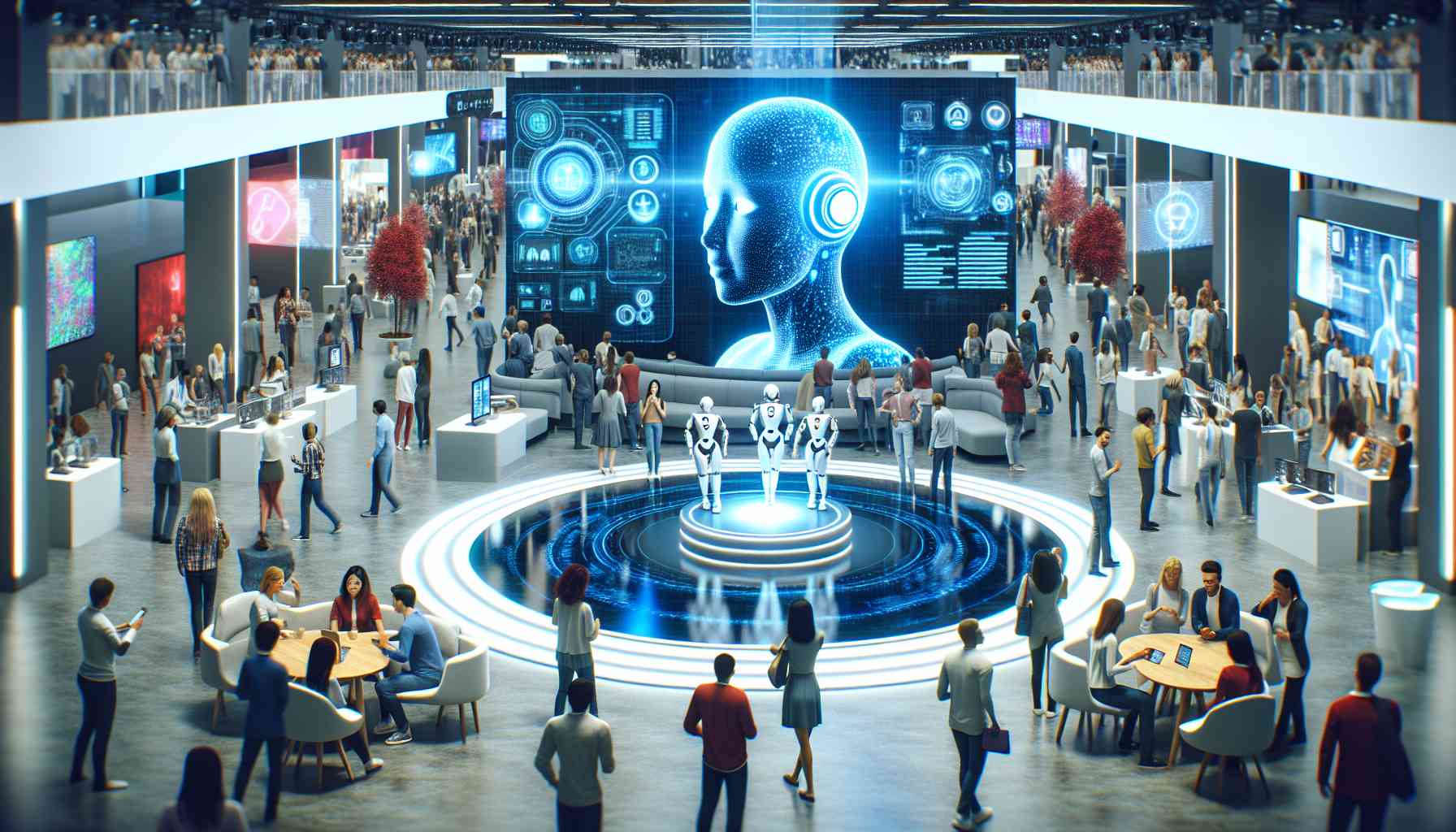

Artificial Intelligence Takes Center Stage at CES 2024

Artificial intelligence (AI) is set to dominate the conversation at CES 2024, with innovative applications appearing in various sectors, including smart home products, laptops, and robots. Over the past year, there has been a surge in the use of generative AI models, such as Google’s Gemini and OpenAI’s GPT-4, powering chatbots, image generators, and office products.… Read the rest

Generative AI Chatbots Revolutionize Technology Landscape

In a remarkable turn of events, OpenAI’s ChatGPT, the cutting-edge generative AI chatbot, has disrupted the tech industry in unprecedented ways. Just five days after its launch in November 2022, ChatGPT garnered a staggering one million users. The mind-blowing growth continued as it attracted an astonishing 100 million users within just two months.… Read the rest

Efficient Training on Supercomputers: NVIDIA vs. AMD and Intel

In a recent research paper, computer engineers at Oak Ridge National Laboratory detailed their successful training of a large language model (LLM) on the Frontier supercomputer. What’s notable is that they achieved impressive results while using only a fraction of the available GPUs.… Read the rest

The Impact of Artificial Intelligence on Job Skills and Career Paths

The rise of artificial intelligence (AI) may bring about automation, but it is also creating a greater demand for creativity and people skills in the workplace. As AI continues to automate routine tasks, workers will need to embrace soft skills to excel in tomorrow’s workplace, according to industry experts.… Read the rest

The Frontier Supercomputer Pushes the Boundaries of LLM Training with AMD’s EPYC CPUs & Instinct GPUs

The Frontier supercomputer, known as the world’s leading and only exascale machine in operation, is making groundbreaking advancements in the field of large language models (LLMs). Powered by AMD’s EPYC CPUs and Instinct GPUs, Frontier has set a new industry benchmark by training a one-trillion-parameter model with the help of hyperparameter tuning.… Read the rest